What is the EU AI Act? The EU AI Act (Regulation EU 2024/1689) is the world’s first comprehensive, binding legal framework for artificial intelligence, classifying AI systems into four risk tiers — unacceptable, high, limited, and minimal — and imposing graduated compliance obligations. It entered into force on 1 August 2024 and will be fully applicable by 2 August 2026 for most provisions.

This content is for informational purposes only. It does not constitute legal advice. Always consult a qualified legal professional before making compliance decisions related to the EU AI Act.

When I began tracking the EU AI Act negotiations in early 2023, I quickly discovered something most general commentary missed: the Act does not regulate “AI” as an abstract concept. It regulates specific use cases of AI systems, ranked by their potential to harm people. That distinction — use case, not technology — is the single most important thing any organisation needs to understand before assessing its compliance obligations.

I also learned, through months of monitoring draft texts and trilogues, that the phased timeline is deliberate. Legislators knew full enforcement in 2024 was impossible; what they built instead is a staged runway that gives businesses time to prepare — if they pay attention.

TL;DR — Key Takeaways

- The EU AI Act entered into force on 1 August 2024 and is being phased in through 2027.

- It uses a four-tier risk framework: unacceptable (banned), high, limited, and minimal.

- Eight AI practices are completely prohibited as of 2 February 2025.

- Fines for the most serious violations reach €35 million or 7% of global annual turnover.

- The Act has extraterritorial reach: it applies to UK, US, Swiss, and global businesses whose AI systems affect EU users.

What Is the EU AI Act 2025?

The EU AI Act, formally Regulation (EU) 2024/1689, is the first comprehensive legal framework on AI worldwide. It entered into force on 1 August 2024 and will be fully applicable from 2 August 2026 for most provisions (European Commission).

The Act’s core philosophy is risk-proportionality. Rather than banning or tightly regulating all AI, it concentrates its strictest rules on applications that could genuinely harm people’s safety, rights, or livelihoods. AI systems that pose little risk — a spam filter, for instance — face no mandatory obligations at all.

The law emerged from nearly three years of negotiation. Spanning 180 recitals and 113 Articles, it takes a risk-based approach to regulating the entire lifecycle of different types of AI systems, from development and placement on the market through to decommissioning (White & Case LLP).

What I Wish I Had Known Earlier: The Act’s scope is defined by outputs, not inputs. An AI system built outside the EU — in the UK, the US, or anywhere else — can still fall under the Act if its outputs are used by people in the EU. Many businesses did not grasp this until late 2024.

EU AI Act Risk Categories: The Four Tiers Explained

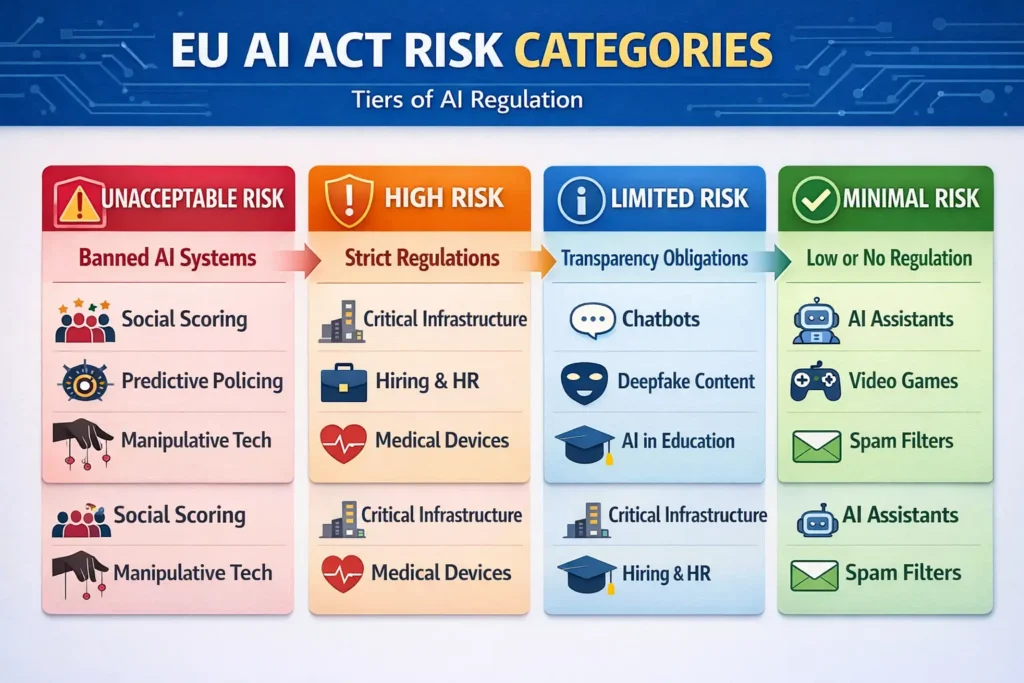

The EU AI Act takes a risk-based approach to regulating AI systems, with four levels of risk: unacceptable, high, limited, and minimal (or no) risk. Each level is subject to a different degree of regulation and different requirements.

Understanding which category applies to your AI system determines almost everything else: your compliance obligations, your deadlines, and your exposure to fines.

| Risk Tier | Status | Examples | Key Requirement |
|---|---|---|---|
| Unacceptable | Banned outright | Social scoring, subliminal manipulation | Do not deploy |
| High | Strictly regulated | Medical devices AI, recruitment screening, biometric ID | Conformity assessment, registration, human oversight |
| Limited | Transparency rules | Chatbots, deepfakes, AI-generated content | Inform users they are interacting with AI |
| Minimal / No Risk | No mandatory rules | Spam filters, AI video games | Voluntary codes of conduct encouraged |

Unacceptable Risk

Unacceptable risk is the highest level of risk and covers eight main types of AI applications incompatible with EU values and fundamental rights. These applications are prohibited in the EU. Deploying them carries the Act’s highest financial penalties.

High-Risk AI Systems

AI systems identified as high-risk are those that have a significant harmful impact on health, safety, and fundamental rights of persons in the EU. These systems must comply with certain mandatory requirements to mitigate risks.

High-risk applications include remote biometric identification, critical infrastructure management, credit scoring, and AI used in education, employment, and border control.

Limited Risk

Limited risk AI systems are subject to lighter transparency obligations: developers and deployers must ensure that end-users are aware that they are interacting with AI — covering chatbots and deepfakes.

Minimal Risk

The AI Act does not introduce rules for AI that is deemed minimal or no risk. The vast majority of AI systems currently used in the EU fall into this category, including applications such as AI-enabled video games or spam filters.
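
As a rough illustration of how the four tiers might be recorded in an internal AI inventory, the Python sketch below maps each tier to the key requirement listed in the table above. The system names in `INVENTORY` and the helper `compliance_summary` are hypothetical, and assigning a real system to a tier requires legal analysis that this code does not perform.

```python
from enum import Enum


class RiskTier(Enum):
    # Declared from strictest to lightest, so list(RiskTier) gives a severity order.
    UNACCEPTABLE = "unacceptable"
    HIGH = "high"
    LIMITED = "limited"
    MINIMAL = "minimal"


# Key requirement per tier, paraphrased from the article's table.
KEY_REQUIREMENT = {
    RiskTier.UNACCEPTABLE: "Do not deploy",
    RiskTier.HIGH: "Conformity assessment, registration, human oversight",
    RiskTier.LIMITED: "Inform users they are interacting with AI",
    RiskTier.MINIMAL: "No mandatory rules; voluntary codes of conduct",
}

# Hypothetical inventory: the tier assignments are illustrative, not legal conclusions.
INVENTORY = {
    "spam filter": RiskTier.MINIMAL,
    "customer support chatbot": RiskTier.LIMITED,
    "recruitment screening tool": RiskTier.HIGH,
    "social scoring engine": RiskTier.UNACCEPTABLE,
}


def compliance_summary(inventory):
    """Return (system, tier, key requirement) rows, strictest tier first."""
    order = list(RiskTier)
    rows = sorted(inventory.items(), key=lambda item: order.index(item[1]))
    return [(name, tier.value, KEY_REQUIREMENT[tier]) for name, tier in rows]


if __name__ == "__main__":
    for name, tier, requirement in compliance_summary(INVENTORY):
        print(f"{name}: {tier} -> {requirement}")
```

Sorting strictest-first puts any banned or high-risk system at the top of the list, which is where review effort usually needs to start.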

What Does the EU AI Act Regulate?

- The Act regulates AI systems — not AI technologies in general. This EU regulation applies to anyone who makes, uses, imports, or distributes AI systems in the EU, regardless of where they are based. It also applies to AI systems used in the EU, even if they are made elsewhere.

- The Act covers several distinct roles in the AI supply chain. Providers are those who develop or commission AI systems and place them on the EU market. Deployers are organisations that use AI systems under their authority. Importers and distributors carry their own, lighter obligations.

- The Act does not apply to AI systems used for military, defence, or national security purposes, or to AI systems used by foreign public authorities or international organisations for law enforcement and judicial cooperation, provided they protect individuals’ rights.

- A key nuance that generic coverage misses: the Act also regulates General Purpose AI (GPAI) models — like the large language models powering today’s AI assistants. The AI Act rules on GPAI became effective in August 2025. GPAI models that may carry systemic risks must assess and mitigate those risks, and providers must also address transparency and copyright-related rules.

What Are the Requirements of the EU AI Act?

Requirements scale with risk. For high-risk AI systems, the obligations are extensive. Providers must maintain a quality management system, produce thorough technical documentation, keep automatic logs of system activity, ensure human oversight mechanisms, and submit to conformity assessment before market entry.

Providers and deployers of high-risk AI systems have a number of obligations, including risk management, human oversight, robustness, accuracy, ensuring the quality and relevance of data sets used, cybersecurity, technical documentation and record-keeping, and the transparency and provision of information to deployers.

For GPAI model providers, the requirements differ depending on whether the model poses systemic risk. All providers of GPAI models that present a systemic risk, whether open or closed, must also conduct model evaluations and adversarial testing, track and report serious incidents, and ensure cybersecurity protections.

Open-source GPAI model providers benefit from lighter obligations. Models released under a free and open licence, whose parameters (including weights), model architecture, and model usage information are publicly available, only have to comply with the copyright policy and training data summary obligations, unless the model presents a systemic risk.
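
The branching described in the last two paragraphs can be sketched as a small Python function. This is an illustrative simplification, not a statement of the legal test: the function name `gpai_obligations`, its two boolean flags, and the label "transparency documentation" (a placeholder for the transparency rules the article mentions without itemising) are all assumptions made for the example.

```python
def gpai_obligations(open_licence: bool, systemic_risk: bool) -> list[str]:
    """Return a simplified list of obligations for a GPAI model provider.

    open_licence  -- model is released under a free and open licence
    systemic_risk -- model is treated as presenting a systemic risk
    """
    # Obligations the article says apply even to open-licence models.
    obligations = ["copyright policy", "training data summary"]

    # The open-licence relief is lost once the model presents systemic risk.
    # "transparency documentation" is a placeholder label (assumption).
    if not open_licence or systemic_risk:
        obligations.append("transparency documentation")

    # Extra duties for systemic-risk models, open or closed.
    if systemic_risk:
        obligations += [
            "model evaluations",
            "adversarial testing",
            "serious incident tracking and reporting",
            "cybersecurity protections",
        ]
    return obligations


if __name__ == "__main__":
    print(gpai_obligations(open_licence=True, systemic_risk=False))
    print(gpai_obligations(open_licence=True, systemic_risk=True))
```

The point of the sketch is the ordering of the checks: an open licence narrows the baseline, but a systemic-risk designation overrides that relief.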

One compliance requirement that surprises many organisations is the AI literacy obligation. From 2 February 2025, providers and deployers must ensure their staff have sufficient AI knowledge to operate and oversee the systems they use. This is not a vague aspiration — it is a legal duty.

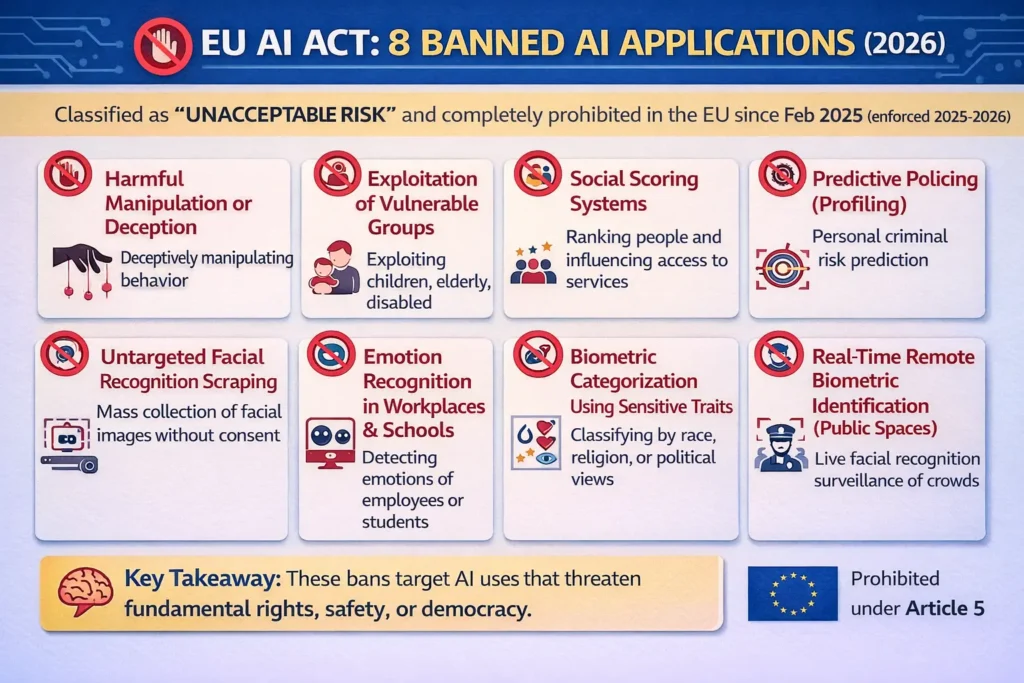

What Practices Are Prohibited Under the EU AI Act?

The AI Act prohibits eight practices, namely those posing a clear threat to the safety, livelihoods and rights of people. These prohibitions came into effect on 2 February 2025.

The eight categories of prohibited AI practices include:

- Manipulation: deploying subliminal, manipulative, or deceptive techniques to distort behaviour and impair informed decision-making, causing significant harm.

- Exploitation of vulnerabilities: targeting people based on age, disability, or socio-economic circumstances to cause harm, for example voice-assisted toys designed to manipulate minors.

- Biometric categorisation to infer sensitive attributes: using biometric data to infer race, political opinions, trade union membership, religious or philosophical beliefs, sex life, or sexual orientation (EU Artificial Intelligence Act).

- Social scoring: evaluating or classifying people based on social behaviour or personal characteristics in ways that lead to detrimental or unjustified treatment. The final text covers private actors as well as public authorities.

- Real-time remote biometric identification in publicly accessible spaces by law enforcement (with narrow exceptions).

- Predictive policing: assessing the risk that an individual will commit a criminal offence based solely on profiling or personality traits.

- Emotion recognition in workplaces and education: AI systems that infer the emotional state of individuals in workplace and educational settings, except for medical or safety reasons.

- Untargeted scraping of facial images: building or expanding facial recognition databases by scraping images from the internet or CCTV footage.

Penalties for Non-Compliance with the EU AI Act

What is the penalty for non-compliance with the EU AI Act?

The Act operates a three-tier penalty structure tied to the type of infringement.

Penalties now include: up to €35 million or 7% of global annual turnover for infringements relating to prohibited AI practices; up to €15 million or 3% of global annual turnover for infringements of certain other obligations; and up to €7.5 million or 1% for supplying incorrect, incomplete, or misleading information to public authorities.

In every case, the applicable fine is whichever figure is higher — the flat amount or the percentage of global turnover. This means large multinationals face potentially far greater exposure than the headline million-euro figures suggest.

For SMEs and start-ups, the fines for all of the above are subject to the same maximum percentages or amounts, but whichever is lower. This is an important proportionality safeguard for smaller organisations.
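
The higher-of and lower-of rules can be sketched in a few lines of Python. This is an illustrative calculation that uses only the caps quoted above; the tier keys, the `max_fine_eur` function, and the `is_sme` flag are assumptions made for the example, and actual fines are set case by case by the competent authorities.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PenaltyTier:
    flat_cap_eur: int   # fixed ceiling in euros
    turnover_pct: int   # ceiling as a whole-number percentage of global annual turnover


# Caps as quoted in this article; the dictionary keys are hypothetical labels.
TIERS = {
    "prohibited_practice": PenaltyTier(35_000_000, 7),
    "other_obligation": PenaltyTier(15_000_000, 3),
    "incorrect_information": PenaltyTier(7_500_000, 1),
}


def max_fine_eur(tier: str, global_turnover_eur: int, is_sme: bool = False) -> float:
    """Return the maximum possible fine for a tier.

    Standard rule: the higher of the flat cap and the turnover percentage.
    SMEs and start-ups: the lower of the two.
    """
    t = TIERS[tier]
    pct_amount = global_turnover_eur * t.turnover_pct / 100
    pick = min if is_sme else max
    return pick(t.flat_cap_eur, pct_amount)


if __name__ == "__main__":
    print(max_fine_eur("prohibited_practice", 2_000_000_000))
    print(max_fine_eur("prohibited_practice", 100_000_000, is_sme=True))
```

For a company with €2 billion in global turnover, the 7% figure is €140 million, four times the €35 million headline cap. For an SME with €100 million in turnover, the lower-of rule caps the same infringement at €7 million.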

The penalty regime for GPAI model providers is slightly deferred. The EU AI Act carves out penalties applicable to providers of GPAI models and postpones these measures until August 2, 2026, aligning them to the enforcement powers relating to GPAI models.

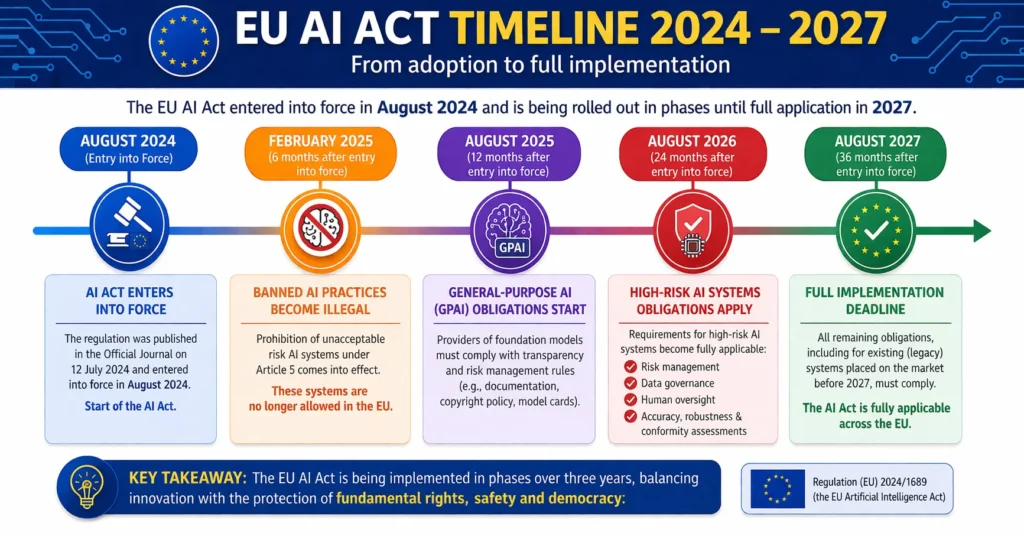

The EU AI Act Timeline: When Does It Take Effect?

When was the EU AI Act passed and when does it take effect?

On July 12, 2024, the EU AI Act (EU Regulation 2024/1689) was published in the Official Journal of the European Union. The text of the law is final and entered into force on August 1, 2024.

The Act operates on a staggered implementation schedule — one of its most practically significant design features.

The phased timeline breaks down as follows:

- February 2, 2025 (6 months after entry into force): provisions on banned AI systems began applying.

- August 2, 2025 (1 year after entry into force): provisions on general purpose AI models started applying.

- August 2, 2026 (2 years after entry into force): the bulk of obligations, including key requirements for providers of high-risk AI systems, will start applying.

- August 2, 2027 (3 years after entry into force): obligations for high-risk AI systems that are safety components in regulated products, such as civil aviation and medical devices, will begin applying.

A critical nuance on existing GPAI models: models placed on the market or put into service before 2 August 2025 must comply with the AI Act by 2 August 2027 (EU Artificial Intelligence Act), which gives legacy models an extended runway.

Key Dates at a Glance

📅 12 July 2024 — Act published in the EU Official Journal

📅 1 August 2024 — Act entered into force

📅 2 February 2025 — Prohibited practices ban took effect; AI literacy obligations apply

📅 2 August 2025 — GPAI model obligations and penalty regime activated; AI Office operational

📅 2 August 2026 — Full application for most high-risk AI systems

📅 2 August 2027 — High-risk systems in regulated products must comply; pre-2025 GPAI models must comply
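
For teams that track these deadlines programmatically, the key dates can be held in a small lookup. The Python sketch below is a minimal illustration using only the dates listed above; the milestone labels are shortened paraphrases, and the function names `milestones_in_effect` and `next_milestone` are hypothetical.

```python
from datetime import date

# Application dates as listed in this article; labels are shortened paraphrases.
MILESTONES = [
    (date(2025, 2, 2), "Prohibited practices ban; AI literacy obligations"),
    (date(2025, 8, 2), "GPAI model obligations"),
    (date(2026, 8, 2), "Bulk of obligations, including most high-risk requirements"),
    (date(2027, 8, 2), "High-risk systems in regulated products; pre-August-2025 GPAI models"),
]


def milestones_in_effect(on: date) -> list[str]:
    """Return the labels of all milestones that already apply on the given date."""
    return [label for start, label in MILESTONES if on >= start]


def next_milestone(on: date):
    """Return the (date, label) of the next upcoming milestone, or None if all have passed."""
    upcoming = [(start, label) for start, label in MILESTONES if start > on]
    return min(upcoming) if upcoming else None


if __name__ == "__main__":
    today = date.today()
    print("Already applicable:", milestones_in_effect(today))
    print("Next milestone:", next_milestone(today))
```

A lookup like this only answers when a block of provisions starts to apply. Whether a given provision applies to a particular system still depends on its risk tier and the organisation's role.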

Does the EU AI Act Apply Outside the EU?

Does the EU AI Act apply to the UK, Switzerland, or Northern Ireland?

This is one of the most misunderstood aspects of the Act. The short answer: yes, in many cases.

The AI Act has an extraterritorial scope: it applies to providers and deployers of AI systems that have their place of establishment or are located in a third country if the output produced by the AI system is used in the EU.

United Kingdom: While the UK is no longer bound by EU law directly after Brexit, the Act’s extraterritorial scope means many UK companies must comply when their AI systems affect EU markets or citizens. A UK firm building a hiring algorithm used by EU employers, for example, is almost certainly in scope.

Switzerland: Although Switzerland is not an EU member, Swiss companies that deploy AI systems whose outputs affect EU users are subject to the same extraterritorial logic. Switzerland’s bilateral agreements with the EU do not exempt it from the Act’s market-location principle.

Northern Ireland: Northern Ireland remains part of the UK post-Brexit. UK law applies, and the EU AI Act does not apply directly. However, Northern Irish businesses selling AI products or services into the EU market will be caught by the Act’s extraterritorial provisions on the same basis as any other UK company.

The combination of worldwide business operations and the Act’s broad extra-territorial scope is expected to lead to it becoming a de facto global standard for AI regulation.

Is the EU AI Act the First of Its Kind?

Is the EU AI Act the first of its kind? Is the EU AI Act binding?

The AI Act is the first-ever comprehensive legal framework on AI worldwide, aiming to foster trustworthy AI in Europe. While other jurisdictions — notably the US, China, and Canada — have published AI strategies, guidelines, or sector-specific rules, none had enacted a comprehensive, horizontally applicable, legally binding AI law before the EU.

Yes, the EU AI Act is fully binding. It is an EU Regulation, not a Directive, meaning it has direct legal effect in all 27 EU Member States without requiring national transposition. Every business in scope must comply — there is no opt-out.

Frequently Asked Questions

When was the EU AI Act adopted?

The European Parliament adopted the EU AI Act on 13 March 2024, and the Council of the EU gave its final approval on 21 May 2024. It was published in the Official Journal of the European Union on 12 July 2024 and entered into force on 1 August 2024.

Is the EU AI Act in force?

Yes. The EU AI Act entered into force on 1 August 2024. Its first provisions — banning prohibited AI practices — became enforceable from 2 February 2025. GPAI obligations applied from August 2025. The majority of high-risk AI obligations apply from August 2026.

When does the EU AI Act fully take effect?

The EU AI Act will be fully applicable from 2 August 2026 for most provisions, with an extended deadline of 2 August 2027 for high-risk AI systems embedded in regulated products like medical devices.

Does the EU AI Act apply to the UK?

Yes. The Act’s extraterritorial scope means many UK companies must comply when their AI systems affect EU markets or citizens, despite Brexit.

Does the EU AI Act apply to Switzerland?

Switzerland is not an EU member, but Swiss organisations whose AI systems produce outputs used in the EU fall under the Act’s extraterritorial scope under the market-location principle.

What is the maximum fine for violating the EU AI Act?

The maximum financial penalty for non-compliance with the EU AI Act’s rules on prohibited uses of AI is the higher of an administrative fine of up to €35 million or 7% of worldwide annual turnover.

When was the EU AI Act implemented — and does it apply to Northern Ireland?

The Act entered into force 1 August 2024, with obligations rolling in from February 2025 through August 2027. Northern Ireland is part of the UK and therefore not directly bound, but organisations in Northern Ireland that provide AI systems used by EU users may fall within the extraterritorial scope.

Conclusion

The EU AI Act summary presented in this article reveals a law that is simultaneously ambitious and pragmatic. It does not try to regulate every algorithm on the planet. Instead, it draws a precise boundary: the higher the risk to human rights and safety, the stricter the rules.

For businesses, the most actionable takeaways from the EU AI Act are threefold:

- Classify your AI systems now. The EU AI Act risk categories — unacceptable, high, limited, and minimal — determine everything that follows. Get this wrong and you face the wrong compliance roadmap, or worse, you deploy a banned application without realising it.

- Do not assume geography protects you. Whether you are in London, Zurich, or Chicago, if your AI system’s outputs reach EU users, the Act applies to you. The Act’s high-risk provisions and penalty regime have extraterritorial reach by design.

- Map your timeline against August 2026. Most EU AI Act obligations for high-risk systems kick in on 2 August 2026. That deadline is closer than it appears. Start your risk assessment, documentation, and human oversight frameworks today.

For organisations that want a deeper dive into their specific obligations, consult the official EU AI Act Single Information Platform and seek qualified legal counsel familiar with EU technology regulation.

Sources:

- Mayer Brown (2024). EU AI Act Published: Which Provisions Apply When? https://www.mayerbrown.com/en/insights/publications/2024/07/eu-ai-act-published-which-provisions-apply-when

- White & Case LLP (2024). Long awaited EU AI Act becomes law. https://www.whitecase.com/insight-alert/long-awaited-eu-ai-act-becomes-law-after-publication-eus-official-journal

- DLA Piper (2025). Latest wave of obligations under the EU AI Act take effect. https://www.dlapiper.com/en-us/insights/publications/2025/08/latest-wave-of-obligations-under-the-eu-ai-act-take-effect

- European Commission — Digital Strategy (2024/2025). AI Act. https://digital-strategy.ec.europa.eu/en/policies/regulatory-framework-ai

- Future of Life Institute — EU AI Act Resource Hub. High-level summary of the AI Act. https://artificialintelligenceact.eu/high-level-summary/

- Future of Life Institute — EU AI Act Resource Hub. Implementation Timeline. https://artificialintelligenceact.eu/implementation-timeline/

- eyreACT (2025). Does the EU AI Act Apply to the UK? https://www.eyreact.com/ai-act-uk/

- Freshfields (2024). EU AI Act Unpacked — Personal and Territorial Scope. https://technologyquotient.freshfields.com/post/102j7cj/eu-ai-act-unpacked-3-personal-and-territorial-scope